A recent Los Angeles Times article’s headline boldly stated, “AI therapy isn’t getting better. Therapists are just failing.”

A warning shot for psychotherapists, sounded loud and clear. Therapists, take heed: That’s the implied message. A bold attack indeed on a community that diligently seeks ways to heal and soothe thousands of suffering souls, utilizing myriad and ever-expanding treatments. A community already finding itself circumvented by the cheap and easy remedies on offer from generative artificial intelligence.

Time is not on the side of the therapeutic world these days. The trend toward evidence-based outcomes, the demands of health insurers and the expectations of instant gratification all clash with the ideal of therapeutic “wholeness” (Heinz Kohut’s “relationship cure”).

Honoring “slow and steady wins the race,” along with the delicate dance of relational healing, becomes increasingly difficult for all parties.

The opinion piece, by psychotherapist and author Jonathan Alpert, calls for a more proactive, solution-focused approach to treatment, which for some may be the missing cure-all. This, however, seems misguided because it overlooks the impact of the trauma that many patients have experienced.

So often the wounded and hidden parts of humans have debilitating effects. “Too much too soon” in therapy becomes damaging and at times dangerous. Targeting trauma with set goals and interventions, while useful at times, fails to grasp the beneficial impact of a therapist actively listening, creating for the patient a unique experience of being seen and understood, perhaps for the first time.

In the early stages of a therapeutic relationship, the incorporation of suggestions for growth becomes a delicate, necessary maneuver, one requiring collaborative feedback between patient and therapist. Each step of the way is based on readiness, openness and inclusive patient feedback.

There is a fine line between challenging a patient’s protective resistance and building a positive impact through sustained empathic inquiry. Another viable approach comes via the contemporary style of motivational interviewing, with the inclusion of relational, collaborative and empathic attunement.

Threading history to present patterns of behavior and experiences remains critical. Recognizing such repetitive patterns and themes, and bringing them into awareness, provides illumination. This ultimately offers choices that were absent in childhood. The opportunity to grieve the losses tied to painful, possibly abusive experiences serves to loosen the grip of frozen trauma states.

If past relational patterns continue to repeat in the present and to be enacted pre-reflectively, a helpless, carried shame likely persists. Self-demeaning or annihilating behaviors forged by childhood trauma often cause retreats within the psyche, into the illusion of a “safe space in cold storage” (the term coined by Donald Winnicott, the renowned British pediatrician).

This is one case for focused and flexible human therapists in the new age of AI and its chatbots.

ChatGPT not a viable option

Patients turning to AI, typically via generative bots such as ChatGPT, can be easily manipulated by the bots’ skewed, preprogrammed information. As this robotic information presents itself to the vulnerable seeker of help and healing, unrealistic hopes and unwanted outcomes may result. ChatGPT can be very persuasive. We all see its great potential and utility, but maybe not its hazards.

AI’s approaches lack crucial, nuanced dimensions, ones that only a focused, collaborative human experience can provide. This failing is deeply concerning. The bot-minions of AI, with their occasional hallucinations and curious use of language, cannot and should not preside over therapeutic experiences. That would be a calamity.

While snippets of support may be present, chatbots’ mental-health advice (largely scraped from random sources on the Internet) can be dangerous and ill-informed.

A New York Times survey found recently that the majority of diagnoses obtained from chatbots were in error. Yet millions of us persist in obtaining medical advice from them, for better or worse. Some go so far as to upload lab results, doctors’ notes and medical images in search of guidance. In many cases, this is folly.

A human direction

AI already has become adept at fooling society and its most vulnerable souls with fantasy and idealization within the security of a disconnected relational world.

Navigating the complexity of relationships requires human beings who are present to receive and repair ruptures, and to hold deep emotions with empathic care and a contemporary, contextual understanding. For optimum treatment outcomes, both parties must also be on a path of growth and reflection.

Psychotherapy must hold a firm and steady footing as AI breathes into our therapy spaces. We must tread skillfully, with a focus on empathic attunement and on the contextual meanings made of each unique life.

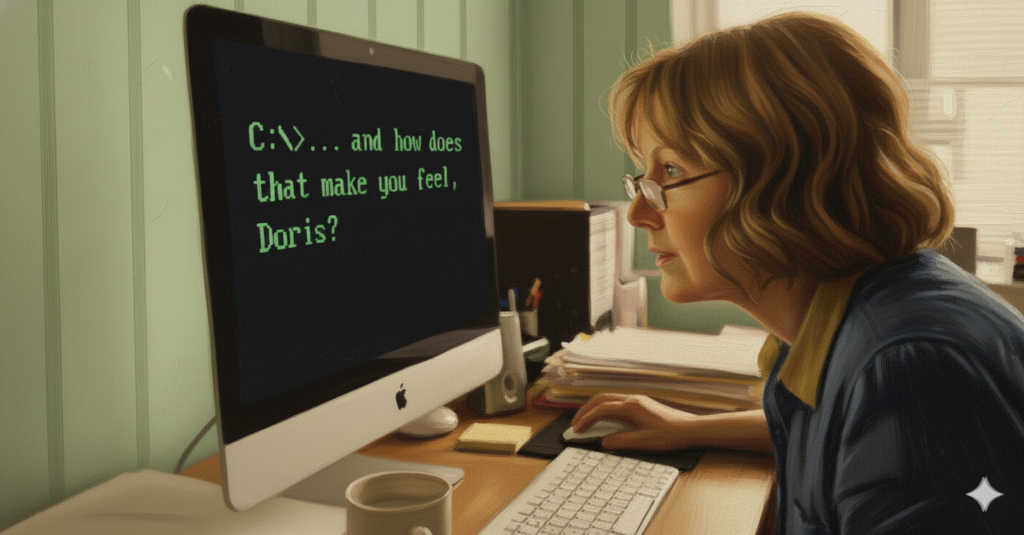

AI photo illustrations via Gemini